In my previous article I created a command-line version of one of my favourite mobile applications, Picnic. This made me consider which other apps I use on a regular basis that I could enhance for my personal use. I am not sure if this is going to be an ongoing series, but let's continue with Too Good To Go.

# Too Good To Go

The Too Good To Go application is a great initiative that allows businesses which typically produce food waste to offer food they can no longer sell to the public at a reduced rate. The application works by scanning your surroundings and listing the businesses that have products which can be purchased that day. It is a win for businesses and consumers alike, as well as a reduction in waste.

# The API

The application has a straightforward API. Once authenticated, users can do the following:

- Search for items

- Manage items (save them as favourites)

- Manage orders

I found it interesting that, for all endpoints, responses were compressed using GZIP. This offers benefits such as faster loading times from reduced bandwidth usage, but it might also be motivated by the app's focus on environmental impact. If your application can reduce the amount of data it transfers, that can decrease energy consumption and the associated carbon emissions.
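To make the bandwidth point a little more concrete, here is a minimal sketch of a gzip round trip in Go. It is not taken from the TGTG client: the helper names `gzipBytes` and `gunzipBytes` and the sample payload are assumptions for illustration only. Note also that Go's `net/http` client normally decompresses gzip responses transparently unless you set the `Accept-Encoding` header yourself, so manual decoding like this is only needed in that case.

```go
package main

import (
	"bytes"
	"compress/gzip"
	"fmt"
	"io"
)

// gzipBytes compresses data, standing in for what a server would send.
func gzipBytes(data []byte) ([]byte, error) {
	var buf bytes.Buffer
	zw := gzip.NewWriter(&buf)
	if _, err := zw.Write(data); err != nil {
		return nil, err
	}
	// Close flushes the remaining compressed data and writes the gzip footer.
	if err := zw.Close(); err != nil {
		return nil, err
	}
	return buf.Bytes(), nil
}

// gunzipBytes reverses gzipBytes, as a client would for a response body
// that carries "Content-Encoding: gzip".
func gunzipBytes(compressed []byte) ([]byte, error) {
	zr, err := gzip.NewReader(bytes.NewReader(compressed))
	if err != nil {
		return nil, err
	}
	defer zr.Close()
	return io.ReadAll(zr)
}

func main() {
	// A repetitive JSON-like payload (made up); real responses will compress differently.
	payload := bytes.Repeat([]byte(`{"item_name":"Snackpakket"},`), 100)

	compressed, err := gzipBytes(payload)
	if err != nil {
		fmt.Println("compress:", err)
		return
	}
	restored, err := gunzipBytes(compressed)
	if err != nil {
		fmt.Println("decompress:", err)
		return
	}

	fmt.Printf("original %d bytes, compressed %d bytes, round trip ok: %v\n",
		len(payload), len(compressed), bytes.Equal(payload, restored))
}
```

Repetitive JSON like this tends to compress well, which is roughly why gzip is a common choice for API responses.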

Many of the endpoints, such as querying for items, leverage POST to pass content that is used for performing a search. While this is not unusual (look at GraphQL), a dedicated QUERY method for HTTP requests would be highly desirable in this scenario: https://www.ietf.org/archive/id/draft-ietf-httpbis-safe-method-w-body-02.html.

Your options for filters are as follows:

    body := ItemsQueryRequest{
        UserId:         c.userId,
        Origin:         Origin{query.Latitude, query.Longitude},
        Radius:         query.Radius,
        PageSize:       query.PageSize,
        Page:           query.Page,
        Discover:       query.Discover,
        FavoritesOnly:  query.FavoritesOnly,
        ItemCategories: query.ItemCategories,
        PickupEarliest: query.PickupEarliest,
        PickupLatest:   query.PickupLatest,
        SearchPhrase:   query.SearchPhrase,
        WithStockOnly:  query.WithStockOnly,
        HiddenOnly:     query.HiddenOnly,
        WeCareOnly:     query.WeCareOnly,
    }

I suspect that if GET were used, it would produce a rather large URL, which was deemed undesirable.
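As a rough sketch of the "search via POST" pattern, the snippet below builds a request whose filters travel in a JSON body rather than in the URL. Everything named here is an assumption for illustration: the `searchFilters` type, its JSON keys, and the `https://example.invalid/items` placeholder URL are not the real API's. The request is only constructed, never sent.

```go
package main

import (
	"bytes"
	"encoding/json"
	"fmt"
	"net/http"
)

// searchFilters is a trimmed-down, hypothetical search body.
// The JSON keys are assumptions, not the real API's field names.
type searchFilters struct {
	Latitude      float64 `json:"latitude"`
	Longitude     float64 `json:"longitude"`
	Radius        int     `json:"radius"`
	FavoritesOnly bool    `json:"favorites_only"`
}

// newSearchRequest encodes the filters as JSON and attaches them to a POST
// request body instead of the URL.
func newSearchRequest(url string, filters searchFilters) (*http.Request, error) {
	payload, err := json.Marshal(filters)
	if err != nil {
		return nil, err
	}
	req, err := http.NewRequest(http.MethodPost, url, bytes.NewReader(payload))
	if err != nil {
		return nil, err
	}
	req.Header.Set("Content-Type", "application/json")
	return req, nil
}

func main() {
	// Placeholder URL; the request is only built here, never sent.
	req, err := newSearchRequest("https://example.invalid/items", searchFilters{
		Latitude: 52.37, Longitude: 4.89, Radius: 5, FavoritesOnly: true,
	})
	if err != nil {
		fmt.Println("build request:", err)
		return
	}
	fmt.Println(req.Method, req.URL.Path, "body bytes:", req.ContentLength)
}
```

The URL stays short no matter how many filters are added, which is the trade-off that seems to be at play here.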

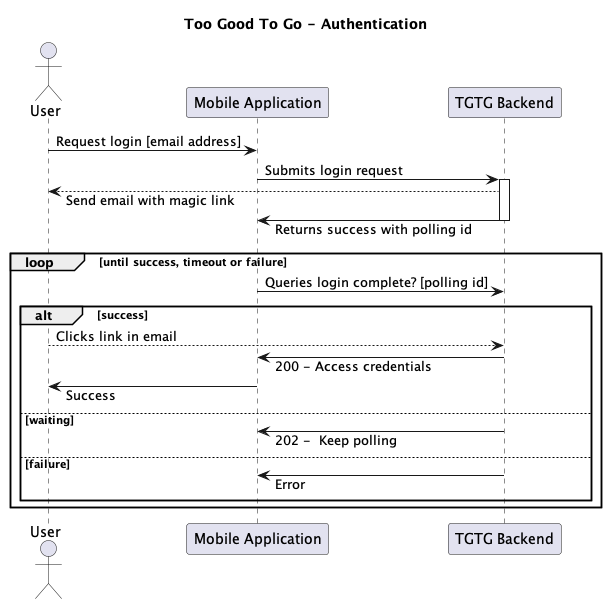

# Authentication

Too Good To Go's authentication process is not password-based; instead it leverages a ‘magic link’ strategy. When a user wishes to authenticate, the TGTG backend produces a short-lived link and sends it to the user’s email address. Upon clicking the link, the user is typically redirected to the application on their phone, and they are now authenticated.

If, however, the user opens the link on a different device (not their phone), the device that made the initial request will continue to poll the TGTG backend until it receives a signal that the authentication was successful.
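The polling behaviour described above could be sketched roughly as follows. This is a generic illustration rather than the actual client code: `pollUntil` and `simulateLogin` are hypothetical names, the 10ms interval is arbitrary, and the "has the user clicked the link yet?" check is simulated with a counter instead of a real request to the backend.

```go
package main

import (
	"context"
	"fmt"
	"time"
)

// pollUntil calls check immediately and then once per interval until it
// reports success, returns an error, or ctx is done.
func pollUntil(ctx context.Context, interval time.Duration, check func() (bool, error)) error {
	ticker := time.NewTicker(interval)
	defer ticker.Stop()

	for {
		done, err := check()
		if err != nil {
			return err
		}
		if done {
			return nil
		}
		select {
		case <-ctx.Done():
			return ctx.Err() // gave up: timed out or cancelled by the caller
		case <-ticker.C:
		}
	}
}

// simulateLogin is a stand-in for the real flow: the "user" clicks the link
// after clickAfter polls, and polling gives up after maxWait.
func simulateLogin(clickAfter int, maxWait time.Duration) (int, error) {
	ctx, cancel := context.WithTimeout(context.Background(), maxWait)
	defer cancel()

	polls := 0
	err := pollUntil(ctx, 10*time.Millisecond, func() (bool, error) {
		polls++
		return polls >= clickAfter, nil
	})
	return polls, err
}

func main() {
	polls, err := simulateLogin(3, time.Second)
	if err != nil {
		fmt.Println("authentication not confirmed:", err)
		return
	}
	fmt.Println("authenticated after", polls, "polls")
}
```

Bounding the loop with a context timeout matters because the magic link is short-lived, so there is little point in polling forever.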

The result of the successful authentication is a user identifier, access token, refresh token and cookie.

The cookie is important as it contains a DataDome value, which serves a similar purpose to reCAPTCHA in trying to mitigate bots.

For all requests that require authentication, the user’s identifier, access token and cookie are required. Access tokens last for four hours and must be refreshed once expired.
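A scheduled script needs some way to decide when to refresh. One possible approach, sketched below, is to compare the token's age against the four-hour lifetime. The function name `needsRefresh` and the five-minute safety margin are assumptions for illustration, not something the API prescribes.

```go
package main

import (
	"fmt"
	"time"
)

// accessTokenLifetime reflects the four-hour lifetime described above.
const accessTokenLifetime = 4 * time.Hour

// refreshMargin is an assumed safety buffer so a token is not used right
// before it expires.
const refreshMargin = 5 * time.Minute

// needsRefresh reports whether a token of the given age should be refreshed.
func needsRefresh(age time.Duration) bool {
	return age >= accessTokenLifetime-refreshMargin
}

func main() {
	issuedAt := time.Now().Add(-3 * time.Hour) // pretend the token is 3 hours old
	age := time.Since(issuedAt)
	fmt.Println("refresh needed:", needsRefresh(age))
}
```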

# So what did I make?

My idea was to create a script that I could load onto a Raspberry Pi, which would check certain items on a scheduled interval and send me an email if those items were available.

I guess you could say the script would wake me up before it was too good to go go.

You might be curious: doesn’t their mobile application already have notifications like this?

The application does offer notifications; however, you cannot opt in to item availability alone. You also receive notifications regarding feature updates, promotions & ‘more’. I therefore wanted more control over this.

## Initialising

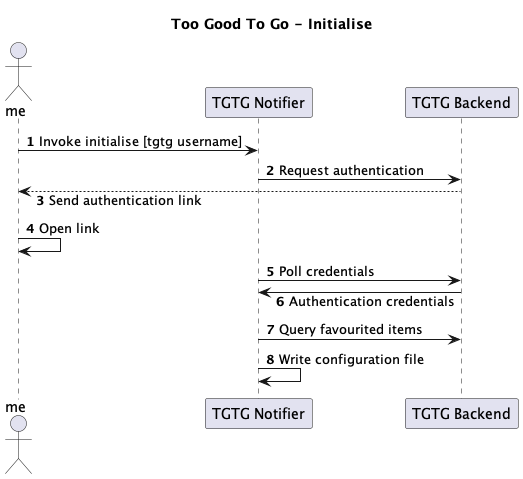

For the script to work I needed to capture authentication data, notification information as well as which items to track.

By using the standard library’s flag package, I could allow the user to initialise the script with the -i flag plus their account email address.

    func main() {
        // -i takes the account email address and triggers the one-off setup.
        initialise := flag.String("i", "", "configure notifier")
        flag.Parse()

        if *initialise != "" {
            log.Println("Starting Initialisation...")
            log.Println("You will receive an email to authenticate.")
            Initialise(*initialise)
            return
        }
    }

The initialiser logic made use of the API’s authentication flow and, upon success, produced a JSON configuration file containing the acquired authentication details, the items favourited by the user and an email config:

    {
      "email_config": {
        "to": "example@example.com",
        "account": "gmail"
      },
      "credentials": {
        "email": "",
        "user_id": "9999999",
        "access_token": "token",
        "refresh_token": "token",
        "cookie": "cookie data"
      },
      "items": [
        {
          "name": "FEBO",
          "item_name": "Snackpakket",
          "item_id": "999999",
          "notify": true,
          "last_notified": "2023-10-09"
        }
      ]
    }

## Checking

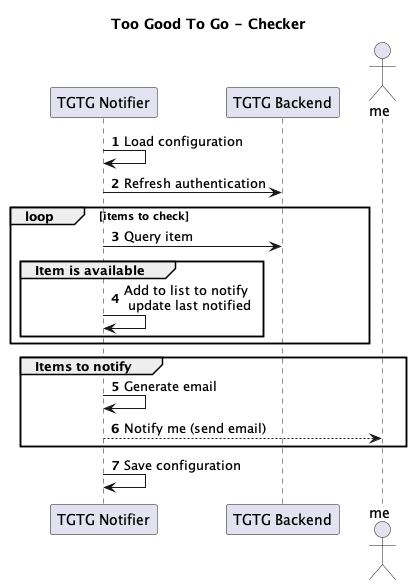

By default, the script loops over the items in the config, checks whether they have new availability and sends all the results as an email.

I decided that a notification should only occur once per item, per day. To achieve this, the config records the last_notified date, which is used to perform a notification check.

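    // ShouldNotify reports whether at least a day has passed since the
    // last notification date (formatted as YYYY-MM-DD).
    func ShouldNotify(toCompare string) bool {
        // No previous notification recorded.
        if toCompare == "" {
            return true
        }

        // Round-trip today's date through the same layout to drop the time of day.
        currentTime := time.Now().Format("2006-01-02")
        current, err1 := time.Parse("2006-01-02", currentTime)
        compare, err2 := time.Parse("2006-01-02", toCompare)
        if err1 != nil || err2 != nil {
            fmt.Println("Error parsing date strings:", err1, err2)
            return false
        }

        return current.Sub(compare) >= 24*time.Hour
    }

## Notifying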

My Raspberry Pi had sendmail installed by default, which I considered using. However, all emails sent using this approach got flagged as spam, so I opted to use msmtp with a dedicated Gmail account instead. I guess my Raspberry Pi could watch YouTube now if it wishes.

    func (t *TgtgNotifier) SendNotification(items []*toogoodtogo.Item) error {
        log.Println("Preparing email")
        emailConfig := t.Config.EmailConfig

        emailContent, emailErr := CreateEmail(ParseItems(items))
        if emailErr != nil {
            return emailErr
        }

        // Headers and HTML body, separated by a blank line.
        emailMessage := fmt.Sprintf("To: %s\nSubject: %s\nContent-Type: text/html\n\n%s", emailConfig.To, subject, emailContent)

        // msmtp reads the message from stdin and sends it via the configured account.
        cmd := exec.Command("msmtp", "-a", emailConfig.Account, emailConfig.To)
        cmd.Stdin = strings.NewReader(emailMessage)
        if cmdErr := cmd.Run(); cmdErr != nil {
            return cmdErr
        }

        log.Println("Email sent")
        return nil
    }

This also exposed me to one of Go’s best features, templates. I wrote the HTML for the email I wished to send as a Go HTML template, which allows its content to be adjusted dynamically.

    <table>
      <tr>
        <th>Store Name</th>
        <th>Item Name</th>
        <th>Pickup Time</th>
      </tr>
      <tr>
        <!-- Table content -->
      </tr>
    </table>

The HTML above allows a slice to be provided, which will be iterated over to populate the table rows.
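For readers unfamiliar with `html/template`, iterating over a slice is done with a `{{range}}` action. The sketch below shows the general idea only. The `PickupInfo` field names, the `rowsTemplate` constant and the `renderRows` helper are assumptions for illustration and may differ from the real template.

```go
package main

import (
	"bytes"
	"fmt"
	"html/template"
)

// PickupInfo mirrors the three table columns; the field names are assumptions.
type PickupInfo struct {
	StoreName  string
	ItemName   string
	PickupTime string
}

// rowsTemplate is a hypothetical table body: one <tr> per element of .Items.
const rowsTemplate = `{{range .Items}}<tr><td>{{.StoreName}}</td><td>{{.ItemName}}</td><td>{{.PickupTime}}</td></tr>{{end}}`

// renderRows executes the template against the given items.
func renderRows(items []PickupInfo) (string, error) {
	tmpl, err := template.New("rows").Parse(rowsTemplate)
	if err != nil {
		return "", err
	}
	var out bytes.Buffer
	data := struct{ Items []PickupInfo }{Items: items}
	if err := tmpl.Execute(&out, data); err != nil {
		return "", err
	}
	return out.String(), nil
}

func main() {
	rows, err := renderRows([]PickupInfo{
		{StoreName: "FEBO", ItemName: "Snackpakket", PickupTime: "18:00"},
	})
	if err != nil {
		fmt.Println("render:", err)
		return
	}
	fmt.Println(rows)
}
```

A nice side effect of `html/template` over `text/template` is that values are HTML-escaped automatically, so a store name containing `&` or `<` cannot break the email markup.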

func CreateEmail(content *[]PickupInfo) (string, error) {

tmpl, templateErr := template.New("emailTemplate").Parse(emailTemplate)

if templateErr != nil {

return "", templateErr

}

var output bytes.Buffer

emailContent := struct{ Items *[]PickupInfo }{Items: content}

exeErr := tmpl.Execute(&output, emailContent)

if exeErr != nil {

return "", exeErr

}

return output.String(), nil

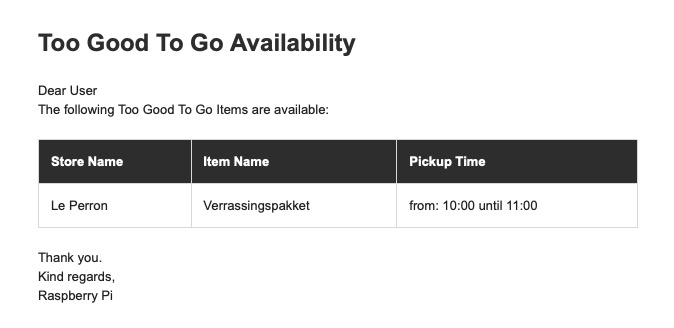

}Here is the end result

## Scheduling the Check

Once I was happy with the application, I needed to build it targeting Linux and ARM so it could be run on the Pi, and then copy it over to the device.

    GOOS=linux GOARCH=arm go build -o bin/tgtg-linux . && scp ./bin/tgtg-linux pi@ip:tgtg/

After initialising it, I used crontab to schedule a task that runs every 15 minutes to perform the check:

    */15 * * * * cd <location of script> && <script> > <location of script>/logfile.log 2>&1

I redirected the output to a log file to capture any possible errors.

# Done

I now had the notifier I wanted for Too Good To Go, and I got more exposure to different parts of Go along the way.

If you wish to check out the complete source code, feel free to take a look: